Microsoft, a major investor in OpenAI, temporarily blocked its employees from accessing ChatGPT, OpenAI’s popular AI chatbot.

The block was later attributed to an accidental side effect of testing security systems for large language models (LLMs).

Microsoft Briefly Blocks ChatGPT Use

Microsoft’s move came to light on Thursday, when the software giant’s employees found they could no longer use ChatGPT. In an internal update, Microsoft said:

“Due to security and data concerns a number of AI tools are no longer available for employees to use.”

The restriction was not exclusive to ChatGPT but extended to other external AI services like Midjourney and Replika.

Microsoft restored access to ChatGPT after identifying the error. A spokesperson later explained:

“We were testing endpoint control systems for LLMs and inadvertently turned them on for all employees.”

Microsoft encouraged its employees and customers to use services such as Bing Chat Enterprise and ChatGPT Enterprise, which it said offer stronger privacy and security protections.

Microsoft and OpenAI work closely together. Microsoft has used OpenAI’s services to enhance its Windows operating system and Office applications, and those services run on Microsoft’s Azure cloud infrastructure.

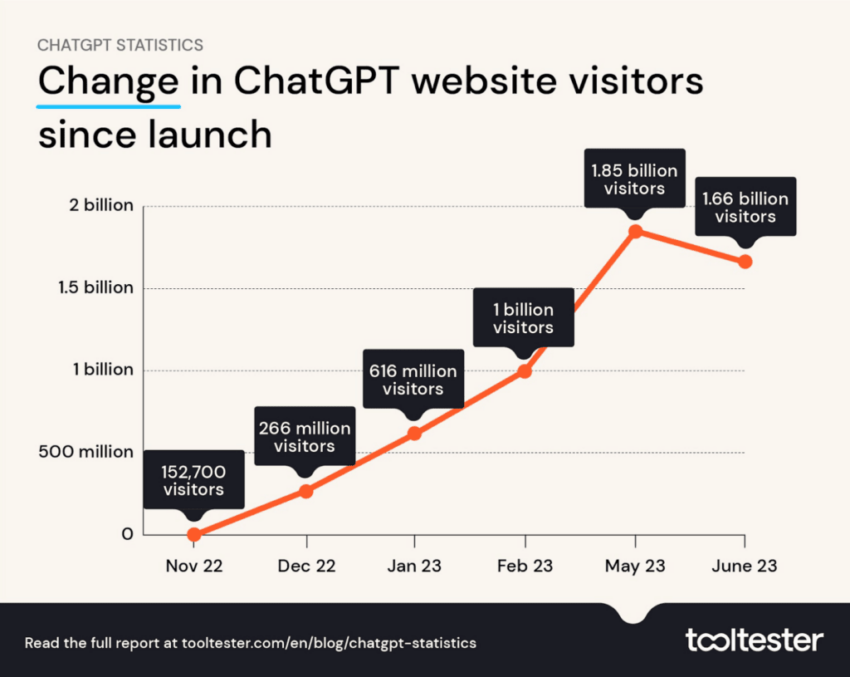

ChatGPT, which has more than 100 million users, is known for producing human-like responses to chat messages.

It has been trained on a broad range of internet data. Some companies have restricted its use out of concern that employees could share confidential data with the service. Microsoft’s update recommended its own Bing Chat tool, which also relies on OpenAI’s artificial intelligence models.

Weighing the Risks

Separately, OpenAI, the company behind ChatGPT, has launched a Preparedness team. The team is led by Aleksander Madry, director of the Massachusetts Institute of Technology’s Center for Deployable Machine Learning, and aims to assess and manage the risks posed by artificial intelligence models.

These risks include individualized persuasion, cybersecurity, and misinformation threats.


OpenAI’s initiative comes as the world grapples with the potential risks of frontier AI, which has been defined as:

“Highly capable general-purpose AI models that can perform a wide variety of tasks and match or exceed the capabilities present in today’s most advanced models.”

As AI continues to evolve and shape our world, companies like Microsoft and OpenAI are making efforts to ensure its safe and responsible use while also pushing the boundaries of what AI can achieve.

Disclaimer

In adherence to the Trust Project guidelines, BeInCrypto is committed to unbiased, transparent reporting. This news article aims to provide accurate, timely information. However, readers are advised to verify facts independently and consult with a professional before making any decisions based on this content.

This article was initially compiled by an advanced AI, engineered to extract, analyze, and organize information from a broad array of sources. It operates devoid of personal beliefs, emotions, or biases, providing data-centric content. To ensure its relevance, accuracy, and adherence to BeInCrypto’s editorial standards, a human editor meticulously reviewed, edited, and approved the article for publication.
