Ethereum co-founder Vitalik Buterin has been discussing Web3 security, highlighting its increasing importance in a world where deepfakes are becoming more prevalent.

On February 9, Buterin cited a recent report regarding a company that lost $25 million. This occurred when a finance worker was duped by a convincing deepfaked video call.

Web3 Security Highlighted in Deepfake Threats

Deepfakes, which are AI-generated fake audio or video, are becoming more prevalent and make it unsafe to authenticate people solely by seeing or hearing them, he said.

“The fact remains that as of 2024, an audio or even video stream of a person is no longer a secure way of authenticating who they are,” Buterin said.

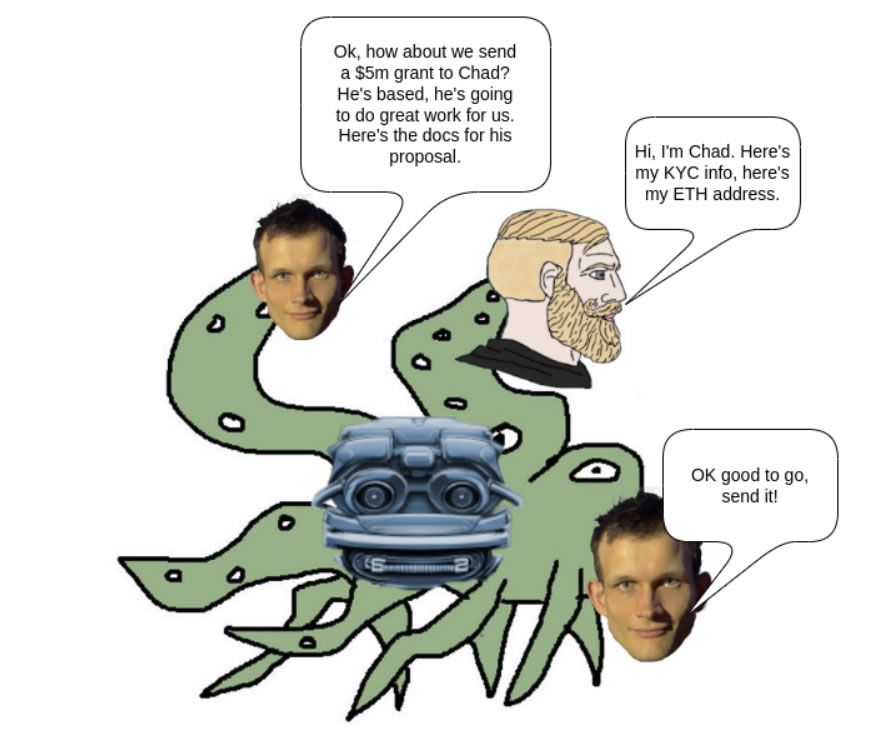

Cryptographic methods such as signing messages with private keys are not enough on their own, Buterin noted, explaining that they bypass the purpose of having multiple signers verify identity. Instead, asking personalized “security questions” based on shared experiences is an effective way to authenticate someone’s identity, he added.

Good questions are unique, difficult to guess, and probe the “micro” details people remember.

“People will often stop engaging in security practices if they are dull and boring, so it’s healthy to make security questions fun,” Buterin suggested.

Security questions should be combined with other techniques, he said. These could include pre-agreed code words, multi-channel confirmation of info, man-in-the-middle attack protections, and delays or limits on irreversible actions.
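As a rough illustration of how two of these layers might fit together, the Python sketch below combines multi-channel confirmation with a delay on large irreversible transfers. It is not taken from Buterin's post: the names `TransferRequest` and `can_execute`, the channel labels, and all threshold values are hypothetical and chosen only for the example.

```python
from dataclasses import dataclass, field

# Hypothetical policy values, chosen only for illustration.
INSTANT_LIMIT = 1_000.0      # transfers at or below this amount skip the delay
DELAY_SECONDS = 24 * 3600    # waiting period for larger transfers
REQUIRED_CHANNELS = 2        # independent channels that must confirm a request


@dataclass
class TransferRequest:
    amount: float
    requested_at: float                      # seconds since epoch
    confirmed_channels: set = field(default_factory=set)


def can_execute(req: TransferRequest, now: float) -> bool:
    """Return True only if every layered check passes."""
    # Layer 1: the request must be confirmed over several independent channels,
    # so a single faked video call is not enough on its own.
    if len(req.confirmed_channels) < REQUIRED_CHANNELS:
        return False
    # Layer 2: small transfers go through once confirmed.
    if req.amount <= INSTANT_LIMIT:
        return True
    # Layer 3: large, irreversible transfers must also wait out the delay,
    # leaving time to notice and cancel a fraudulent request.
    return now - req.requested_at >= DELAY_SECONDS
```

Under these assumptions, a large request confirmed only over a video call would be refused, and even a fully confirmed one would execute only after the waiting period has passed.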

Security questions between individuals differ from those between an enterprise and an individual, such as bank security questions, and should be tailored to the people involved.

Buterin concluded that no one technique is perfect. However, layering techniques adapted to the situation can provide effective Web3 security even in a world where audio and video can be faked.

“In a post-deepfake world, we do need to adapt our strategies to the new reality of what is now easy to fake and what remains difficult to fake, but as long as we do, staying secure continues to be quite possible,” Buterin emphasized.

On February 9, it was reported that deepfake voices, images, and other manipulated online content have already had a negative impact on this year’s US elections. The White House said it is seeking ways to verify all its communications and prevent various forms of generative AI fakery, manipulation, and abuse.

Last month, the World Economic Forum (WEF) said that AI-generated misinformation and deepfakes were the world’s greatest short-term threat. Also in January, MicroStrategy founder Michael Saylor warned about deepfakes of him being used to scam users out of their Bitcoin.

Disclaimer

In adherence to the Trust Project guidelines, BeInCrypto is committed to unbiased, transparent reporting. This news article aims to provide accurate, timely information. However, readers are advised to verify facts independently and consult with a professional before making any decisions based on this content.
